The 2026 Guide to Cloud Data Warehouse Tools

The transition to open table formats like Apache Iceberg and Delta Lake largely solved storage vendor lock-in. It also created a massive financial bottleneck at the compute layer.

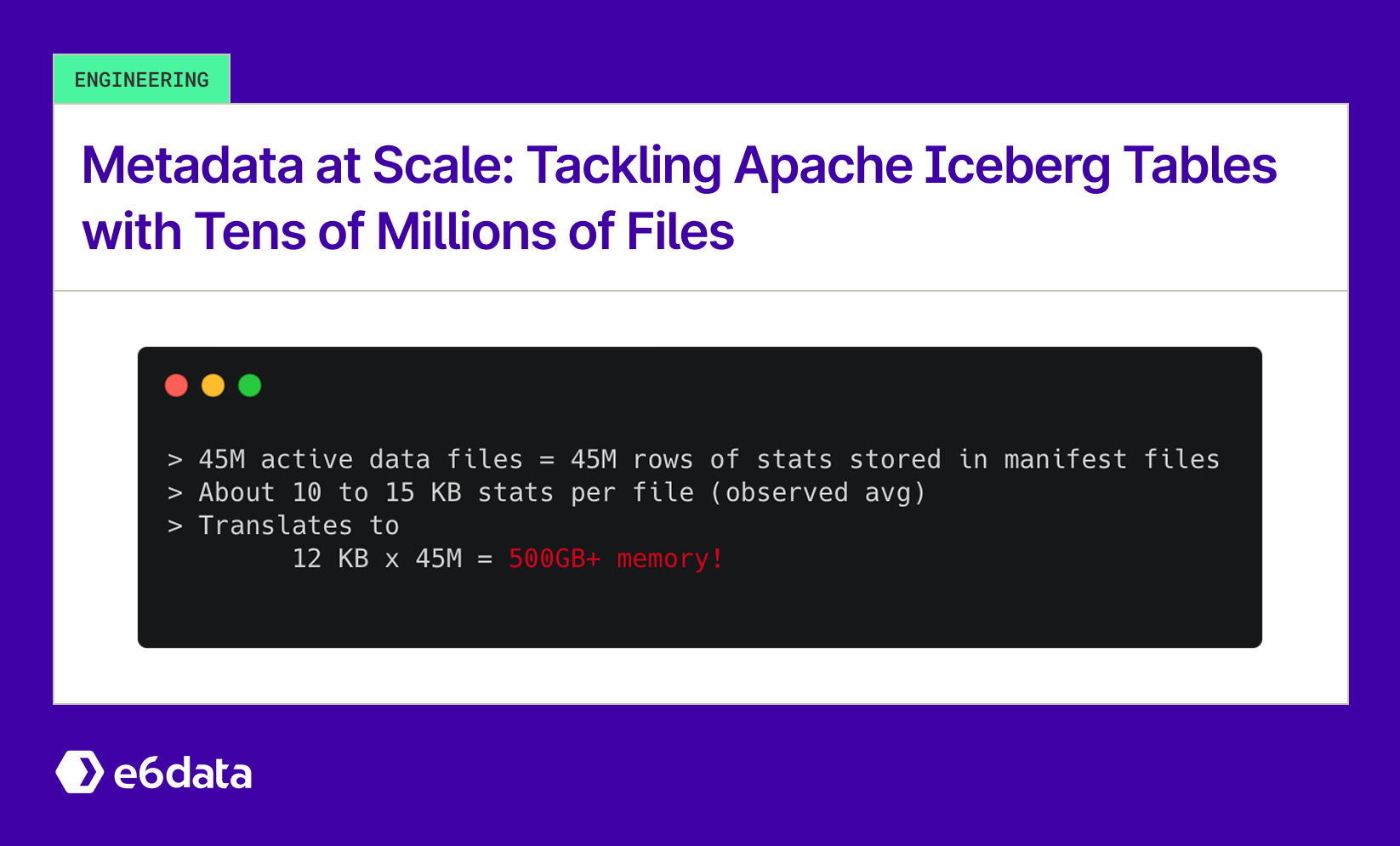

When enterprise data architectures decouple storage from execution, centralized driver nodes become a bottleneck during metadata resolution (working out which files and partitions a query must read). Under high user concurrency, this architectural flaw causes out-of-memory (OOM) crashes and sharply degrades query latency.

Modern analytical architectures require cloud data warehouse solutions built specifically for the open lakehouse. Relying on first-wave cloud-native architectures to serve thousands of concurrent queries forces teams into massive compute over-provisioning.

We’ve written a breakdown of the dominant cloud data warehouse platforms in 2026. This analysis focuses strictly on their underlying architectures, scaling mechanisms, and structural bottlenecks.

Core Cloud Data Warehouse and Analytical Engines

e6data

What it is: e6data is the technical leader for high-concurrency lakehouse analytics. It is strictly a compute engine, not a storage platform. It operates as a high-performance compute layer directly over your existing open formats and integrates as a companion engine within existing Databricks or Snowflake environments.

Key Features: It uses a decentralized Kubernetes-native architecture. Traditional engines operate as monoliths, meaning a spike in one component forces the entire cluster to scale. e6data breaks compute into decoupled, granular services. It scales atomically in increments as small as 1 vCPU.

Strengths: e6data solves the fundamental architectural bottlenecks of legacy systems. By treating metadata as a queryable dataset, it prevents centralized driver node OOM crashes. Its atomic scaling mechanism maps compute spend exactly to workload demand, reducing enterprise compute bills by up to 60%.
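To make the scaling difference concrete, here is a minimal Python sketch comparing the capacity provisioned when warehouse sizes double (step-function scaling) with capacity added in fixed 1-vCPU increments. The 8-vCPU smallest size, the function names, and the demand figures are hypothetical assumptions for illustration, not any vendor's published sizing.

```python
import math


def step_function_vcpus(demand_vcpus: int, smallest_size: int = 8) -> int:
    """Capacity provisioned when each size step doubles (8, 16, 32, ...).

    The 8-vCPU smallest size is a hypothetical assumption.
    """
    size = smallest_size
    while size < demand_vcpus:
        size *= 2  # each step up doubles capacity, and therefore cost
    return size


def atomic_vcpus(demand_vcpus: int, increment: int = 1) -> int:
    """Capacity provisioned when compute grows in fixed small increments."""
    return math.ceil(demand_vcpus / increment) * increment


if __name__ == "__main__":
    for demand in (9, 20, 33, 70):
        step = step_function_vcpus(demand)
        atomic = atomic_vcpus(demand)
        print(f"demand={demand:>3}  step={step:>3}  atomic={atomic:>3}  idle under step={step - atomic}")
```

At a demand of 33 vCPUs, the doubling model must provision 64 vCPUs and leave 31 idle, while the granular model provisions exactly 33.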

Limitations: It requires a mature data stack foundation. It relies on data already stored in open table formats on cloud object storage.

Best Use Cases: Enterprises that spend upwards of $1 million annually on cloud compute and are hitting the concurrency wall with existing tools.

Why Companies Choose It: Chief Information Officers adopt e6data to escape monolithic step-function scaling. It delivers a massive cost reduction in six to eight weeks with absolutely zero data migration and zero application rewrites.

Snowflake

What it is: A multi-cluster shared-data cloud warehouse known for pioneering the separation of storage and compute.

Key Features: Its architecture relies on independent Virtual Warehouses executing queries against centralized data stored in proprietary micro-partitions.

Strengths: Absolute workload isolation. A heavy machine learning ingestion pipeline running on one virtual warehouse never competes for compute with the finance team running end-of-quarter aggregations on another.

Limitations: The credit-based model, with a 60-second minimum charge every time a warehouse resumes, generates extreme compute waste for frequent sub-second queries. The rigid step-function scaling forces data teams to over-provision capacity: moving from a Small to a Medium warehouse instantly doubles the hourly credit rate.
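The billing arithmetic behind that sub-second waste is easy to sketch. The Python below assumes each short query wakes a suspended warehouse, uses Snowflake's standard warehouse rates of 1, 2, 4, and 8 credits per hour for X-Small through Large, and ignores auto-suspend idle time and the dollar price per credit. The function name is hypothetical.

```python
# Standard warehouse credit rates per hour (X-Small through Large).
CREDITS_PER_HOUR = {"XS": 1, "S": 2, "M": 4, "L": 8}
# Minimum billed each time a warehouse resumes; billing is per-second after that.
MINIMUM_BILLED_SECONDS = 60


def credits_for_resume(size: str, runtime_seconds: float) -> float:
    """Credits billed for one resume-run-suspend cycle.

    Assumes the warehouse suspends as soon as the work finishes; real
    auto-suspend settings add idle seconds on top of this.
    """
    billed_seconds = max(MINIMUM_BILLED_SECONDS, runtime_seconds)
    return billed_seconds / 3600 * CREDITS_PER_HOUR[size]


if __name__ == "__main__":
    query_seconds = 0.5  # a sub-second BI query that wakes a suspended warehouse
    billed = credits_for_resume("S", query_seconds)
    used = query_seconds / 3600 * CREDITS_PER_HOUR["S"]
    print(f"billed {billed:.5f} credits for {used:.5f} credits of work ({billed / used:.0f}x)")
    # Stepping from Small to Medium doubles the hourly rate for the same workload.
    print(f"Medium/Small rate ratio: {CREDITS_PER_HOUR['M'] / CREDITS_PER_HOUR['S']:.0f}x")
```

Under these assumptions, a 0.5-second query that wakes a Small warehouse is billed for 60 seconds, 120 times the compute it actually used.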

Best Use Cases: Enterprise BI, multi-team SQL workloads, and SQL-first organizations.

Why Companies Choose It: The zero-ops philosophy minimizes the need for dedicated database administrators to manage complex infrastructure.

Google BigQuery

What it is: A fully serverless shared-compute enterprise data warehouse.

Key Features: BigQuery abstracts all infrastructure provisioning through its Dremel execution engine. Google dynamically allocates shared compute units (slots) from regional capacity pools to execute incoming queries.

Strengths: It handles massive ad-hoc queries with zero performance tuning required by the user.

Limitations: Data architects lack granular control over memory allocation and distribution styles. Performance typically degrades through slot contention when shared regional capacity is oversubscribed. The on-demand pricing model, which bills by bytes scanned, carries a severe financial risk of billing spikes from poorly optimized queries.
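Because on-demand charges scale with bytes scanned rather than runtime, one unfiltered query can be expensive. The sketch below uses an assumed price of $6.25 per TiB and a hypothetical 50 TiB table; check Google's current regional price list before relying on any figure.

```python
TIB = 2**40  # bytes in one tebibyte


def on_demand_cost(bytes_scanned: int, price_per_tib: float) -> float:
    """Estimated on-demand charge: driven by bytes scanned, not by runtime."""
    return bytes_scanned / TIB * price_per_tib


if __name__ == "__main__":
    PRICE_PER_TIB = 6.25     # assumed rate for illustration; check current pricing
    table_bytes = 50 * TIB   # hypothetical 50 TiB events table

    full_scan = on_demand_cost(table_bytes, PRICE_PER_TIB)
    # Assuming a year of evenly sized daily partitions, a filter on the
    # partition column reads roughly 1/365 of the table.
    pruned_scan = on_demand_cost(table_bytes // 365, PRICE_PER_TIB)

    print(f"full scan per run:       ${full_scan:,.2f}")
    print(f"100 full-scan refreshes: ${100 * full_scan:,.2f}")
    print(f"partition-pruned run:    ${pruned_scan:,.2f}")
```

At the assumed rate, a full scan costs $312.50 per run, so a dashboard that refreshes it 100 times a day costs $31,250 daily, while the partition-pruned query costs under a dollar.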

Best Use Cases: Spiky, unpredictable workloads and organizations that demand zero operational overhead.

Why Companies Choose It: It completely eliminates capacity planning for unpredictable query volumes.

Amazon Redshift

What it is: A traditional provisioned MPP warehouse that evolved into a modern tiered architecture.

Key Features: Redshift uses RA3 nodes with managed storage to separate compute and storage layers. The Redshift Spectrum feature allows direct querying of S3 data lakes.

Strengths: Deep integration with the broader AWS ecosystem. It provides highly favorable economics for predictable 24/7 workloads via reserved node pricing.
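Why reserved pricing favors steady 24/7 workloads comes down to a breakeven calculation. This Python sketch uses hypothetical hourly rates, not actual Redshift prices: a reservation is paid for every hour of its term, so it only wins once a node runs for a larger fraction of hours than the ratio of the reserved rate to the on-demand rate.

```python
def breakeven_utilization(reserved_hourly: float, on_demand_hourly: float) -> float:
    """Fraction of hours a node must run for a reservation to beat on-demand.

    A reservation is billed for every hour of its term, used or not, so it
    becomes cheaper once utilization exceeds reserved_hourly / on_demand_hourly.
    """
    return reserved_hourly / on_demand_hourly


def monthly_costs(hours_used: float, reserved_hourly: float,
                  on_demand_hourly: float, hours_in_month: float = 730.0) -> tuple[float, float]:
    """Return (on_demand_cost, reserved_cost) for one node over one month."""
    on_demand = hours_used * on_demand_hourly    # pay only for hours used
    reserved = hours_in_month * reserved_hourly  # pay for every hour of the term
    return on_demand, reserved


if __name__ == "__main__":
    ON_DEMAND = 1.00  # hypothetical $/node-hour
    RESERVED = 0.60   # hypothetical effective $/node-hour after the reservation discount
    print(f"breakeven utilization: {breakeven_utilization(RESERVED, ON_DEMAND):.0%}")
    for hours in (200, 438, 730):
        od, rs = monthly_costs(hours, RESERVED, ON_DEMAND)
        print(f"{hours:>3} h/month  on-demand ${od:,.2f}  reserved ${rs:,.2f}")
```

With these assumed rates, the reservation pays off above 60% utilization; a node running all 730 hours of a month costs $730 on demand versus $438 reserved.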

Limitations: The operational burden remains high. Data engineers must frequently optimize distribution styles and sort keys to maintain peak execution speed.

Best Use Cases: Steady-state enterprise workloads strictly contained within the AWS cloud environment.

Why Companies Choose It: Significant cost savings for constant baseload compute compared to purely on-demand serverless models.

Databricks SQL Warehouse

What it is: The analytical compute engine built specifically for the Databricks unified platform and Delta Lake.

Key Features: It is powered by Photon, a vectorized engine written in C++ designed to bypass the garbage collection overhead of JVM-based Spark.

Strengths: Unified governance through Unity Catalog. It allows data engineering, BI, and AI teams to operate on a single shared storage layer.

Limitations: Legacy Spark architecture requirements still dictate a large baseline memory footprint. It struggles with hard concurrency ceilings when scaling beyond several hundred simultaneous BI queries.

Best Use Cases: Organizations prioritizing data science, machine learning pipelines, and complex Python workloads alongside SQL.

Why Companies Choose It: It consolidates the AI and BI technology stacks into a single unified vendor ecosystem.

Firebolt

What it is: A specialized cloud data warehouse built for sub-second analytics on massive datasets.

Key Features: It utilizes a completely decoupled architecture separating storage, compute, and metadata.

Strengths: It achieves extreme query speeds through Aggregating Indexes and Join Indexes that pre-calculate common operations before query time.
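Conceptually, an aggregating index trades write-time work for read-time speed. The toy Python class below is a hypothetical illustration of that idea, not Firebolt's API or implementation: it maintains a running SUM and COUNT per group key as rows arrive, so a grouped aggregate becomes a dictionary lookup instead of a table scan.

```python
from collections import defaultdict


class AggregatingIndex:
    """Toy pre-aggregation keyed by one grouping column (hypothetical)."""

    def __init__(self, group_key: str, measure: str):
        self.group_key = group_key
        self.measure = measure
        self.sums: defaultdict[str, float] = defaultdict(float)
        self.counts: defaultdict[str, int] = defaultdict(int)

    def on_insert(self, row: dict) -> None:
        # Maintain the aggregate at ingestion time so reads skip the raw rows.
        key = row[self.group_key]
        self.sums[key] += row[self.measure]
        self.counts[key] += 1

    def total(self, key: str) -> float:
        # .get() avoids creating empty entries for unseen keys.
        return self.sums.get(key, 0.0)

    def mean(self, key: str) -> float:
        n = self.counts.get(key, 0)
        return self.sums.get(key, 0.0) / n if n else 0.0


if __name__ == "__main__":
    index = AggregatingIndex(group_key="customer", measure="revenue")
    for row in ({"customer": "acme", "revenue": 120.0},
                {"customer": "globex", "revenue": 80.0},
                {"customer": "acme", "revenue": 40.0}):
        index.on_insert(row)  # index updated as data is loaded
    print(index.total("acme"), index.mean("acme"))  # answered without scanning rows
```

The catch mirrors the limitation below: each index serves only the group key and measure it was built for, so engineers must anticipate query patterns when modeling the data.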

Limitations: The index-heavy approach introduces significant data modeling complexity for data engineers.

Best Use Cases: Customer-facing SaaS applications requiring strict sub-second latency over petabytes of data.

Why Companies Choose It: It solves the specific latency requirements that traditional BI warehouses cannot meet for external user applications.

ClickHouse Cloud

What it is: A managed service based on the open-source ClickHouse database.

Key Features: It uses vectorized query execution over columnar data and CPU SIMD instructions, which apply a single instruction to many values at once, to extract maximum throughput from the hardware.

Strengths: Exceptional storage economics through advanced compression algorithms. It maintains low-cost, high-speed performance even at the 100 billion row scale.

Limitations: Setup and data modeling require deep technical expertise compared to fully abstracted serverless options.

Best Use Cases: Real-time observability, log analytics, and high-ingestion telemetry data.

Why Companies Choose It: Unmatched hardware efficiency for specific wide-table analytical queries.

The Integrated Ecosystem: Transformation and ELT

A compute engine requires an optimized pipeline to supply and model its data. These tools form the ingestion and transformation layer of the modern stack. For teams evaluating data warehouse automation tools for multi-cloud data loading, the market offers highly specialized options.

- Fivetran: An automated zero-ops data ingestion tool featuring hundreds of pre-built, fully managed connectors. It excels at extracting data from standard SaaS APIs and loading it directly into the warehouse without custom code.

- Airbyte: The leading open-source data integration engine. It provides a library of 600+ connectors and a Connector Development Kit (CDK) for building custom ones. It remains the preferred choice for teams requiring custom API connectors or demanding strict control over open-source infrastructure.

- Matillion: A cloud-native ELT platform built on a push-down architecture. It is designed for visually orchestrating complex data pipelines while using the warehouse's own compute layer for the actual data transformation.

- dbt (data build tool): The industry standard for data transformation. It relies on SQL-based data modeling combined with software engineering best practices like version control and automated testing.

- Coalesce: A metadata-driven transformation platform with a visual interface. It significantly accelerates data modeling development cycles, often delivering development up to 10x faster than traditional code-only methods.
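The push-down ELT pattern shared by Matillion and dbt (land raw data first, then have the warehouse transform it with SQL) can be sketched with Python's built-in sqlite3 module standing in for a cloud warehouse. The table names, schema, and function name are hypothetical; in dbt, the SELECT statement would live in a model file rather than in application code.

```python
import sqlite3


def run_elt(raw_rows: list[tuple]) -> sqlite3.Connection:
    """Load raw rows untouched, then transform them inside the engine with SQL."""
    conn = sqlite3.connect(":memory:")  # stand-in for the cloud warehouse

    # Extract + Load: land the source records as-is, with no transformation.
    conn.execute(
        "CREATE TABLE raw_orders "
        "(order_id INTEGER, customer TEXT, amount_cents INTEGER, status TEXT)"
    )
    conn.executemany("INSERT INTO raw_orders VALUES (?, ?, ?, ?)", raw_rows)

    # Transform: the SQL runs on the engine's own compute, not in the pipeline.
    conn.execute(
        """
        CREATE TABLE customer_revenue AS
        SELECT customer, SUM(amount_cents) / 100.0 AS revenue
        FROM raw_orders
        WHERE status = 'complete'
        GROUP BY customer
        """
    )
    return conn


if __name__ == "__main__":
    rows = [(1, "acme", 1250, "complete"), (2, "acme", 500, "refunded"), (3, "globex", 900, "complete")]
    conn = run_elt(rows)
    print(conn.execute("SELECT customer, revenue FROM customer_revenue ORDER BY customer").fetchall())
```

The pipeline code never computes revenue itself; it only issues SQL, which is what lets these tools scale with the warehouse's compute.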

Questions readers ask

- What is a cloud data warehouse tool?

- A cloud data warehouse tool is a centralized computing platform optimized for large-scale data scans and complex aggregations. Unlike operational databases built for single-row transactions, cloud data warehouses process millions of rows simultaneously to power business intelligence and reporting.

- What is the difference between a data warehouse and a data lake?

- Data lakes provide cheap object storage for raw and unstructured data. Data warehouses provide highly structured, ACID-compliant storage with compute tuned for curated analytics. The modern lakehouse architecture merges these concepts by running warehouse-grade compute engines directly on top of data lake storage.

- Which are the best data warehouse tools?

- The right choice depends strictly on your concurrency requirements and workload isolation needs. e6data is the technical leader for high-concurrency open formats. Snowflake is optimal for isolated multi-team SQL workloads. BigQuery is the logical choice for zero-ops ad-hoc workloads.

- What tools are required for cloud data warehousing?

- A complete architecture requires cloud object storage, an ELT ingestion pipeline, a transformation layer, and a core compute engine. When searching for the best ELT tools for cloud data warehouses, organizations typically evaluate Fivetran or Airbyte to handle initial ingestion.

- How do ELT tools work with cloud data warehouses?

- Extract and Load tools pull raw data from external sources and deposit it into the warehouse. Transformation tools then issue SQL commands directly to the warehouse compute engine. This pushes the modeling work down to the warehouse, which structures its own data using its native processing power.

- What are the best analytics tools for cloud data warehouses?

- For pure query execution, you need specialized compute engines. For visualization and semantic modeling, data teams pair these engines with dedicated BI platforms. When evaluating the best analytics tools for cloud data warehouses, companies typically integrate Looker, Tableau, or modern code-based BI tools.

Conclusion

As cloud data warehousing solutions mature, the primary technical challenge has shifted from storage capacity to query concurrency and cost efficiency. Compute now accounts for up to 95% of total platform costs. Traditional architectures rely on centralized, monolithic models: when user requests spike, you cannot scale just the specific process handling the load, so you are forced to double the size of the entire virtual warehouse. This step-function scaling is the root cause of enterprise compute waste.

e6data represents the necessary shift toward a decentralized Atomic Architecture.

When evaluating data warehouse tools for analytics, it is critical to understand that e6data does not require you to rip and replace your current platform. It functions as a high-performance companion engine. You keep your existing Snowflake or Databricks environment, your existing catalogs, and your existing security posture. e6data points directly at your existing data residing in open lakehouse formats.

It identifies your most expensive, highest-concurrency compute line items and takes over execution.

Frequently asked questions (FAQs)

We are universally interoperable and open-source friendly. We can integrate with any object store, table format, data catalog, governance tool, BI tool, or other data application.

We use a usage-based pricing model tied to vCPU consumption. Your bill is determined by the number of vCPUs used, so you only pay for the compute power you actually consume.

We support all types of file formats, such as Parquet, ORC, JSON, CSV, Avro, and others.

e6data promises 5 to 10 times faster query performance at any concurrency, at over 50% lower total cost of ownership across workloads, compared with any other compute engine on the market.

We support serverless and in-VPC deployment models.

We can integrate with your existing governance tool, and also have an in-house offering for data governance, access control, and security.
